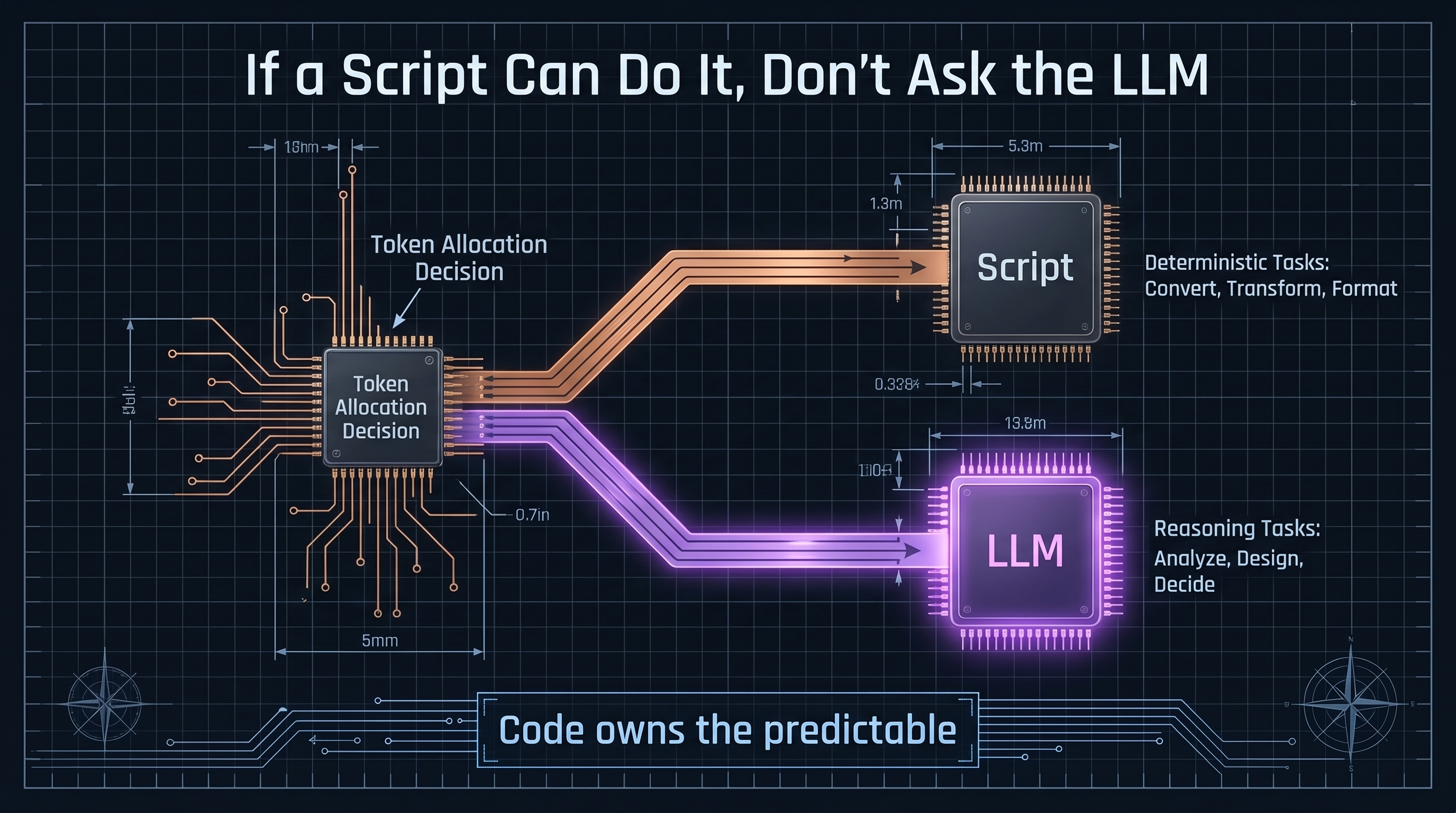

If a Script Can Do It, Don’t Ask the LLM

When your AI system spends inference tokens parsing date fields, stripping PDF formatting artifacts, or converting one file format to another, that is not an AI problem. It is an architecture problem. Code can handle all of those tasks deterministically, and it does so faster, cheaper, and more accurately than any language model. If your token bill is high and your outputs are inconsistent, the problem is usually not the model. It is what you are handing the model.
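To make the point concrete, here is a minimal sketch of what handling those three tasks in plain code might look like, using only the Python standard library. The function names, the list of date layouts, and the specific artifacts being stripped are illustrative assumptions, not a prescribed pipeline; real inputs will likely need a longer list of formats and cleanup rules.

```python
import csv
import io
import json
import re
from datetime import date, datetime

# Hypothetical list of date layouts; extend it to match your own data.
DATE_FORMATS = ("%Y-%m-%d", "%d/%m/%Y", "%Y/%m/%d")


def parse_date(text: str) -> date:
    """Try each known layout in order; raise if none matches."""
    cleaned = text.strip()
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(cleaned, fmt).date()
        except ValueError:
            continue
    raise ValueError(f"unrecognized date format: {text!r}")


def strip_pdf_artifacts(text: str) -> str:
    """Remove a few common PDF extraction artifacts (an assumed, partial list)."""
    text = text.replace("\u00ad", "")             # soft hyphens
    text = re.sub(r"(\w)-\n(\w)", r"\1\2", text)  # words hyphenated across lines
    text = re.sub(r"[ \t]+", " ", text)           # runs of spaces and tabs
    return text.strip()


def json_to_csv(json_text: str) -> str:
    """Convert a non-empty JSON array of flat objects to CSV text."""
    rows = json.loads(json_text)
    out = io.StringIO()
    writer = csv.DictWriter(
        out, fieldnames=list(rows[0].keys()), lineterminator="\n"
    )
    writer.writeheader()
    writer.writerows(rows)
    return out.getvalue()
```

Each function returns the same output for the same input every time and fails loudly on anything it does not recognize. Those failures are the cases worth escalating, whether to a person or to a model; everything else never needs to consume a token.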